I was in the staff room a few weeks ago looking at a book that someone had left on one of the tables. You will probably want to know the title and the author, but I am afraid I can’t remember. Actually, that is not really relevant because the part that I want to write about is a common view expressed in a number of serious educational books, articles and blogs. Indeed, if I did remember the title, I would probably not want to give it to you because you might read the book and that could be dangerous! You might become convinced by the underlying hypothesis or just depressed if you don’t. I think I lie in the latter camp. Hence this article.

The book was one of those that claim to confront the various spurious bits of advice that teachers get thrown at them and respond to them with the facts! There is nothing wrong with this in principle, of course, but there are certain assumptions hiding behind the facts that make them “facts”. The bit that I took issue with concerned groupwork. The author commented on how teachers are always told that encouraging students to work in groups is a good thing. He wanted to reassure teachers that this was not true. The evidence, he claimed, was that groupwork was not beneficial to learning, and so teachers should not be worried if they didn’t do much of it.

This argument goes even further in some quarters, where it is pointed out that the level of what students do as a group is often lower than when they work individually. It is also pointed out that genuine assessment is difficult with a group because you often cannot ascertain what a particular individual has contributed to the collective. Some students end up doing most of the work and others do very little. This last complaint often comes from parents and, curiously, always from the parents of the children who do all the work. They will point out how hard their child is working, how their marks are reduced due to the lack of effort from others, and that their child is probably being held back by all this groupwork nonsense. Strangely, the child in question is often particularly gifted as well.

So, with all this stacked up against it, why would anyone want kids to learn in a group? The short answer is to ask “What if the thing you actually wanted them to learn is the skill of collaboration?” Seen through this lens, all the evidence that indicates that groupwork doesn’t work, that you can’t assess it and kids don’t do it very well, indicates that you should be doing more of it and teaching them how to do it rather than avoiding it.

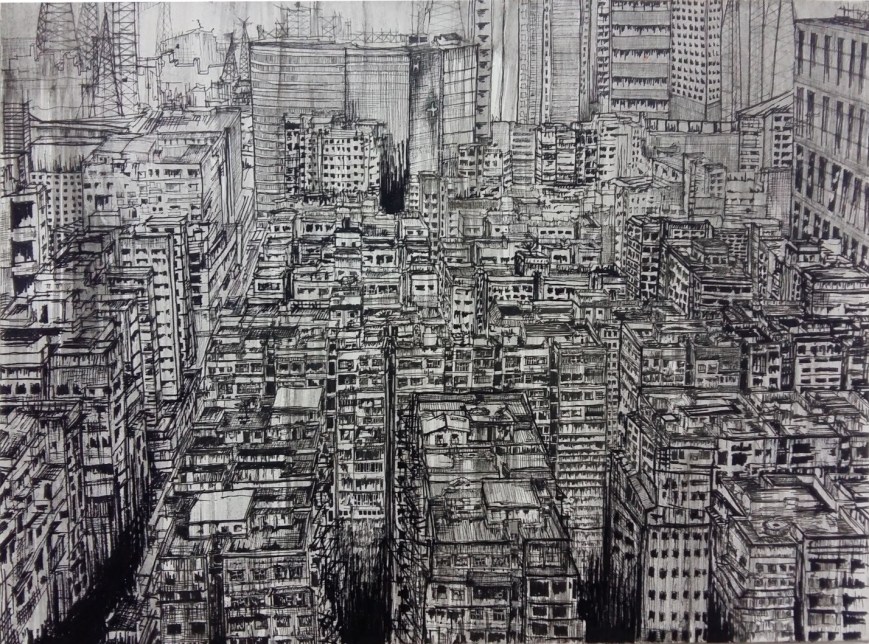

The thing is that we know collaboration is important, probably more important than whatever it is that the kids are collaborating over. In nearly all walks of life working in a team or a group is a key to success. If you ask potential employers what they want their future employees to be able to do, collaboration, or working effectively in a team, always comes up high on the list. (See this report.) OK, there are the occasional Picassos and Einsteins, but they are outnumbered by Jobs and Wozniak, Lennon and McCartney, Crick and Watson and Marks and Spencer (!). As humans we are social animals. Doing stuff with others is a large part of what makes us human. At school, we should be teaching that in all parts of the curriculum, not just on the football team or in the orchestra.

So we should actively teach collaboration, and equip our students with the skills to turn a dysfunctional collaborative group into a high-functioning one. How do we do this?

I want to suggest a process which I call “Learning through talking about it”. I probably need to come up with a better name, but the idea is that if we are continually aware of what we are doing we tend to get better at it. The deeper the awareness, the deeper the learning. This very much applies to the so-called soft skills, of which collaboration is one. Formative assessment tends to be the process by which we encourage awareness, but it is the self-assessment aspect that is the vital part. The children must know themselves and have a language to express that knowledge.

So, what we need to do is to give names to the various roles that a group takes and that individuals take within a group, so that children can recognise what they are doing and what the group is doing. For example, if a particular group project has a period of divergent thinking, where the members contribute ideas that are “out of the box”, the students should identify that this is the out-of-the-box phase. Or the task may be divided into roles, so that one student takes on the role of the out-of-the-boxer, while another becomes the reality-checker, who steps in to help converge all the ideas to a single path forward. It is important that students, in reflecting on the group’s effectiveness, say what they did in these phases or who took up which role. There are models of the various roles that a group or person in a group can take on. Students should take different roles at different times.

An old friend of mine, Gilbert Halcrow, led an investigation into this where he came up with a series of dispositions that applied to collaboration. He divided them into two groups.

There is an excellent original article by Gilbert Halcrow, from a few years ago, which goes into the creation of the dispositions, their rationale and, crucially, their use. I recommend it strongly. His examples are drawn from Theatre but are applicable elsewhere.

Reflection, after a collaboration, includes answering questions such as “Who contributed what?” and “What could I have done to ensure better contribution from others?”. What you find is that students become good at including others, at encouraging others and, if someone cannot cope with their role, modifying it so it is achievable. My suspicion is that mixed age groups encourage this to work smoothly, because there is a natural hierarchy that allows the hopefully more experienced older students to goad the younger ones along.

So, if collaboration is important and we need to teach and encourage groupwork, what was the flaw in the article in the book that told us to avoid it?

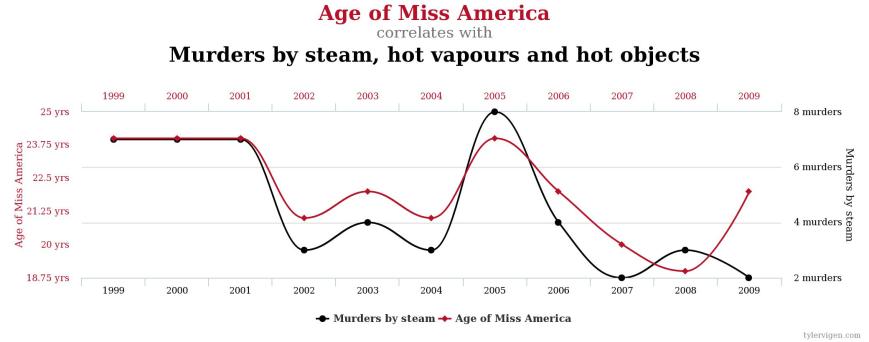

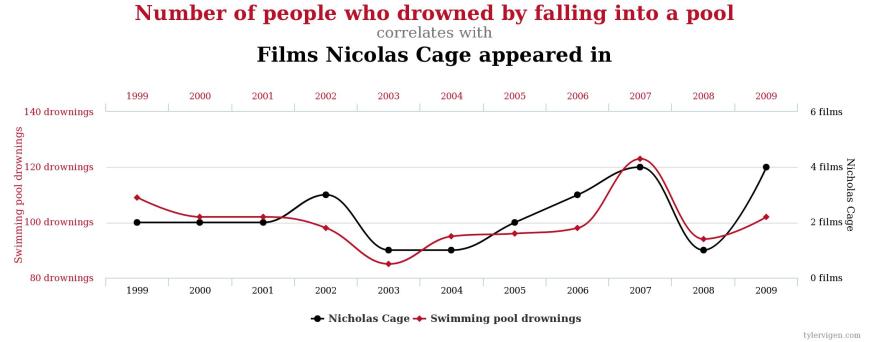

You see, under the hood of the “don’t work in groups” argument is the assumption that we have all agreed what education is for, that it can be measured and that the results in the measurable things are the only goals worth pursuing. I am reminded of the quote about “not everything of value can be measured and not everything that can be measured has value”, which is attributed to a variety of people including, in some form and apparently erroneously, Albert Einstein. I have written before about the dangers of relying on data, but this post is more about the motivation for the conclusion about groupwork.

This depressing view of education starts from the seemingly innocuous premise that learning is “a change in long term memory”. There is nothing inherently wrong with the statement as long as we agree what we understand by memory. If we are not careful we equate memory with recall, and so we assess the success of education by measuring recall with tests. It all becomes very self-fulfilling. If we limit the measure of success in education to students being able to re-do techniques they have been taught, then direct teaching of these techniques is going to work best. You get the same argument against open-ended problems and learning through discovery. The evidence, they say, shows it doesn’t work as well as direct instruction. But if what you want the students to learn is how to confront an open-ended problem or discover something original, then the direct instruction argument fails.

It was interesting that the discussions we had with parents last year at Markham College demonstrated they were much more concerned about values education than the recall of anything. They wanted their children to show empathy and respect, to care for themselves and others and to be happy. You could make an argument that learning how to have empathy is a change in memory, but it is not what people generally understand by memory. It could, I suppose, come under a broader definition of learning as a change in behaviour, but how it gets measured is a serious problem. Whether you could include being happy as a change in behaviour I am not so sure. I would suggest teaching collaboration helps with a great number of these values.

Education has to be about so much more than the measurable. It is about passion and joy and what it means to be human. Let’s celebrate that in our students.

Originally published in the Markham College Teaching and Learning Blog here.